What is Kubernetes?

Kubernetes is a popular open source platform for container orchestration. That means it is used for managing applications built from multiple, largely self-contained runtimes called containers.

Since the launch of the Docker container project in 2013, containers have become increasingly popular. As container use increased, large and distributed container applications became increasingly difficult to coordinate. Kubernetes appeared to solve exactly this problem, and it has become a key part of the container revolution because it makes container applications drastically easier to manage at scale.

Kubernetes is most often used with Docker, the most popular containerization platform. It can also work with any container system that conforms to the Open Container Initiative (OCI) standards for container image formats and runtimes. Because Kubernetes is open source, it has relatively few restrictions on how it can be used. Anyone who wants to run containers can use it freely, and it can run in the public cloud, on-premises, or both.

What are Containers?

Container technology gets its name from the shipping industry. Instead of devising a unique way of sending each product, goods are placed in steel shipping containers that are designed to be lifted by the crane at the dock and loaded onto ships built to accommodate standard-sized containers. In short, by standardizing the way items are held, the container can be moved as a unit, and moving it this way costs less.

A container is a way to package an application so that it can run, together with its dependencies, isolated from other processes.

Container technology in computing addresses an analogous situation. Have you ever had a program that worked perfectly on one machine but turned into a clumsy mess when moved to the next? This can happen when software is migrated from the developer’s computer to a test server, or from a physical server in the company’s data center to a cloud server. The problems come from differences between the machine environments, such as the installed OS, SSL libraries, network topology, security, and storage.

Just as a crane lifts an entire shipping container as a unit onto a boat or truck, which makes it easy to move, container technology moves software as a unit. A container holds not only the software but also its dependencies, including libraries, binaries, and configuration files. Because they all migrate together, the differences between machines, such as OS differences and underlying hardware, are far less likely to cause incompatibilities and crashes. Containers also make it easier to deploy software to a server.

Containerized microservices architectures have profoundly changed the way development and operational teams test and deploy modern software. Containers are helping companies modernize by facilitating scaling and deployment of applications, but containers have also introduced new challenges and complexity by creating a whole new infrastructure ecosystem.

Software companies large and small now deploy thousands of container instances every day, and that scale is complex to manage. This is why they use Kubernetes.

Why use Kubernetes?

Kubernetes was originally developed by Google and, as mentioned above, is an open source container orchestration platform designed to automate the deployment, scaling, and management of containerized applications. Kubernetes has established itself as the standard for container orchestration and is a major project of the Cloud Native Computing Foundation (CNCF). It is supported by leading companies in the IT industry such as Google, Microsoft, IBM, AWS, Cisco, Intel, and Red Hat.

Here are four of the most important reasons why more and more companies are using Kubernetes:

Kubernetes and containers allow much better use of resources than hypervisors and VMs. Because containers are lighter than VMs, they require less memory, CPU, and other resources to operate.

Kubernetes runs on Google Cloud Platform (GCP), Amazon Web Services (AWS), and Microsoft Azure, and you can also run it on-premises. You can move workloads without having to redesign your applications or completely revise your infrastructure, which allows you to standardize on one platform and avoid vendor lock-in.

Major cloud service providers offer numerous Kubernetes-as-a-Service products. Google Kubernetes Engine (GKE), Azure Kubernetes Service (AKS), Amazon EKS, Red Hat OpenShift, and IBM Cloud Kubernetes Service all provide a fully managed Kubernetes platform, so you can focus on what’s most important to you: shipping applications that delight your users.

Kubernetes allows you to deliver a self-service platform as a service (PaaS) that abstracts the hardware layer away from development teams. Your development teams can quickly and efficiently request the resources they need. If they need more resources to handle extra workload, they can get them just as quickly, because the resources come from infrastructure that is shared across all your teams.

How does Kubernetes work?

Kubernetes makes it much easier to deploy and operate applications in a microservices architecture. It does this by creating an abstraction layer on top of a group of hosts, so that development teams can deploy their applications and let Kubernetes manage all the important processes.

Processes managed by Kubernetes are:

The central component of Kubernetes is the cluster. A cluster consists of many virtual or physical machines, each of which serves a specialized function as either a master or a node. Each node hosts a group of one or more containers (which contain your applications), and the master tells the nodes when to create or destroy containers. At the same time, it tells the nodes how to reroute traffic based on the new container placement.

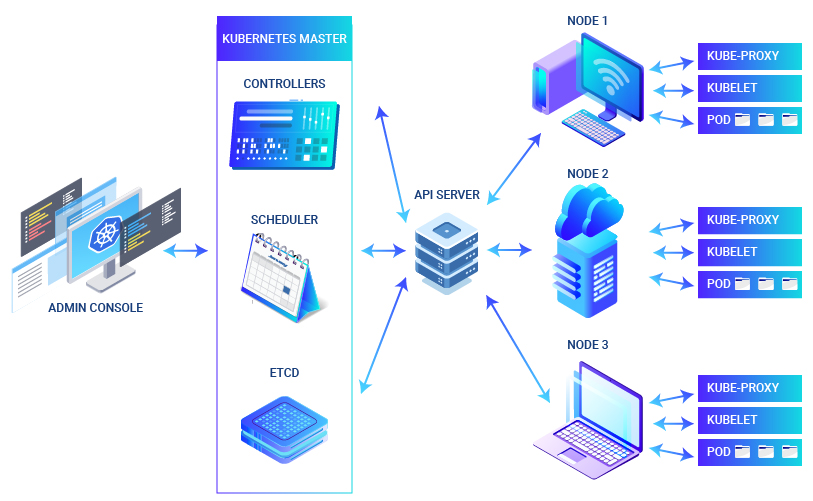

The picture above shows the Kubernetes cluster architecture.

Kubernetes Master

The Kubernetes master is the main point from which administrators and other users interact with the cluster to manage the scheduling and deployment of containers. A cluster always has at least one master, and it may have more than one, depending on the cluster’s replication pattern.

The master stores all state and configuration information for the entire cluster in etcd, a persistent, distributed key-value data store. Nodes do not read etcd directly; they learn the container configurations they must maintain through the API server, which is backed by etcd. You can run etcd on the Kubernetes master or in a standalone configuration.

The kube-apiserver handles communication between the master and the rest of the cluster. It makes sure that the configuration stored in etcd matches the configuration of the containers deployed in the cluster.

The kube-controller-manager runs the control loops that manage the state of the cluster through the Kubernetes API server. The controllers for deployments, replicas, and nodes all run in this service. The node controller, for example, is responsible for registering a node and monitoring its health throughout its life cycle.
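The idea of a control loop can be illustrated with a small sketch. This is not the controller manager's actual code: the `reconcile` function, the `web-N` pod names, and the in-memory list of pods are all hypothetical, and a real controller would read and write state through the API server. The sketch only shows the pattern of comparing a desired state with an observed state and taking corrective action.

```python
from itertools import count


def reconcile(desired_replicas, running, name_source):
    """Run one pass of a control loop.

    Compares the desired replica count with the pods that are actually
    running and returns the corrected pod list plus the actions taken.
    """
    pods = list(running)
    actions = []
    # Too few pods: create new ones until the desired count is reached.
    while len(pods) < desired_replicas:
        name = f"web-{next(name_source)}"  # hypothetical naming scheme
        pods.append(name)
        actions.append(("create", name))
    # Too many pods: delete the surplus, newest first.
    while len(pods) > desired_replicas:
        actions.append(("delete", pods.pop()))
    return pods, actions


names = count(1)
pods, actions = reconcile(3, [], names)     # initial rollout of three pods
pods.remove("web-2")                        # simulate a pod crashing
pods, actions = reconcile(3, pods, names)   # the next pass replaces it
print(pods, actions)
```

Running the loop repeatedly is what keeps the cluster converging on the declared state, even when pods fail between passes.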

The purpose of the kube-scheduler is to track and manage node workloads in the cluster. It keeps track of the capacity and resources of each node and assigns work to nodes based on their availability.
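As a rough illustration of assigning work based on availability, the sketch below filters out nodes that cannot fit a pod and then picks the node with the most free CPU. The `schedule` function, the node names, and the "most free CPU wins" rule are assumptions made for this example; the real kube-scheduler applies a much richer set of filtering and scoring rules.

```python
def schedule(pod_cpu, pod_mem, nodes):
    """Pick a node for a pod, or return None if no node can fit it.

    `nodes` maps a node name to its free resources, for example
    {"node-a": {"cpu": 2000, "mem": 4096}} (millicores and MiB).
    """
    # Filter: keep only nodes with enough free CPU and memory.
    candidates = [
        (name, free)
        for name, free in nodes.items()
        if free["cpu"] >= pod_cpu and free["mem"] >= pod_mem
    ]
    if not candidates:
        return None
    # Score: prefer the node with the most free CPU, then the most free memory.
    name, free = max(candidates, key=lambda item: (item[1]["cpu"], item[1]["mem"]))
    # Reserve the resources so later decisions see the updated capacity.
    free["cpu"] -= pod_cpu
    free["mem"] -= pod_mem
    return name


nodes = {
    "node-a": {"cpu": 2000, "mem": 4096},
    "node-b": {"cpu": 4000, "mem": 8192},
}
print(schedule(1000, 1024, nodes))  # node-b has the most free CPU
print(schedule(2500, 512, nodes))   # only node-b can still fit this pod
print(schedule(1000, 512, nodes))   # node-b is nearly full, so node-a is chosen
```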

The cloud-controller-manager is a service that runs in Kubernetes and helps it stay “cloud-agnostic.” It serves as an abstraction layer between the cloud vendor’s APIs and tools (for example, load balancers or storage volumes) and their representative counterparts in Kubernetes.

Kubernetes Nodes and Pods

Every node in a Kubernetes cluster must be configured with a container runtime, typically Docker or another OCI-compliant runtime. The container runtime starts and manages containers as Kubernetes deploys them to the nodes in the cluster. Your applications (web servers, databases, API servers, and so on) run inside those containers.

The kubelet is an agent process that runs on every Kubernetes node. It is responsible for managing the state of the node: starting, stopping, and maintaining application containers based on instructions from the control plane. The kubelet also collects performance and health information from the node and from the pods and containers it runs, and shares that information with the control plane to help it make scheduling decisions.

The kube-proxy is a network proxy that runs on each node in the cluster. It also works as a load balancer for the services running on a node.
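To make the load-balancing role concrete, here is a minimal round-robin sketch. The `ServiceProxy` class and the endpoint addresses are hypothetical, and round-robin is only one possible strategy; how kube-proxy actually selects a backend depends on how it is configured.

```python
from itertools import cycle


class ServiceProxy:
    """Spread requests for one service across its backend pod endpoints."""

    def __init__(self, endpoints):
        if not endpoints:
            raise ValueError("a service needs at least one endpoint")
        # cycle() yields the endpoints in order, forever.
        self._endpoints = cycle(list(endpoints))

    def route(self):
        """Return the endpoint that should receive the next request."""
        return next(self._endpoints)


# Hypothetical pod IPs backing a single service.
proxy = ServiceProxy(["10.0.0.1:8080", "10.0.0.2:8080"])
for _ in range(3):
    print(proxy.route())
```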

A pod is the basic scheduling unit in Kubernetes. It consists of one or more containers that are guaranteed to be co-located on the same host machine and can share resources. Each pod has its own IP address within the cluster, which allows applications to use ports without conflict. A pod can define one or more volumes, such as a local disk or a network disk, and expose them to the containers in the pod, allowing different containers to share storage space. For example, one container can fetch content into a volume while another container forwards that content elsewhere.

A Pod Spec describes the desired state of the containers in a pod as a YAML or JSON object.
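As a hedged example, the following sketch builds a pod manifest as a Python dictionary and prints it as JSON. The field names (`apiVersion`, `kind`, `metadata`, `spec`, `containers`, `volumes`, `volumeMounts`, `emptyDir`) follow the Kubernetes Pod API, but the helper `make_pod_manifest`, the pod and container names, the images, and the `/data` mount path are illustrative assumptions, not values from this article.

```python
import json


def make_pod_manifest(pod_name, containers, shared_volume=None):
    """Build a pod manifest for (name, image) container pairs.

    If `shared_volume` is given, every container mounts the same
    emptyDir volume at /data, so the containers can share files.
    """
    spec = {"containers": []}
    for name, image in containers:
        container = {"name": name, "image": image}
        if shared_volume:
            container["volumeMounts"] = [
                {"name": shared_volume, "mountPath": "/data"}
            ]
        spec["containers"].append(container)
    if shared_volume:
        # emptyDir is scratch storage that lives as long as the pod does.
        spec["volumes"] = [{"name": shared_volume, "emptyDir": {}}]
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": pod_name},
        "spec": spec,
    }


manifest = make_pod_manifest(
    "content-pod",
    [("fetcher", "busybox:1.36"), ("server", "nginx:1.25")],
    shared_volume="content",
)
print(json.dumps(manifest, indent=2))
```

The printed JSON could be saved to a file and submitted to a cluster; the same structure can be written as YAML instead.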

Kubernetes Benefits
